Photorealistic Rendering

2025

-

Physically-Based Rendering for VR

(work-in-progress)

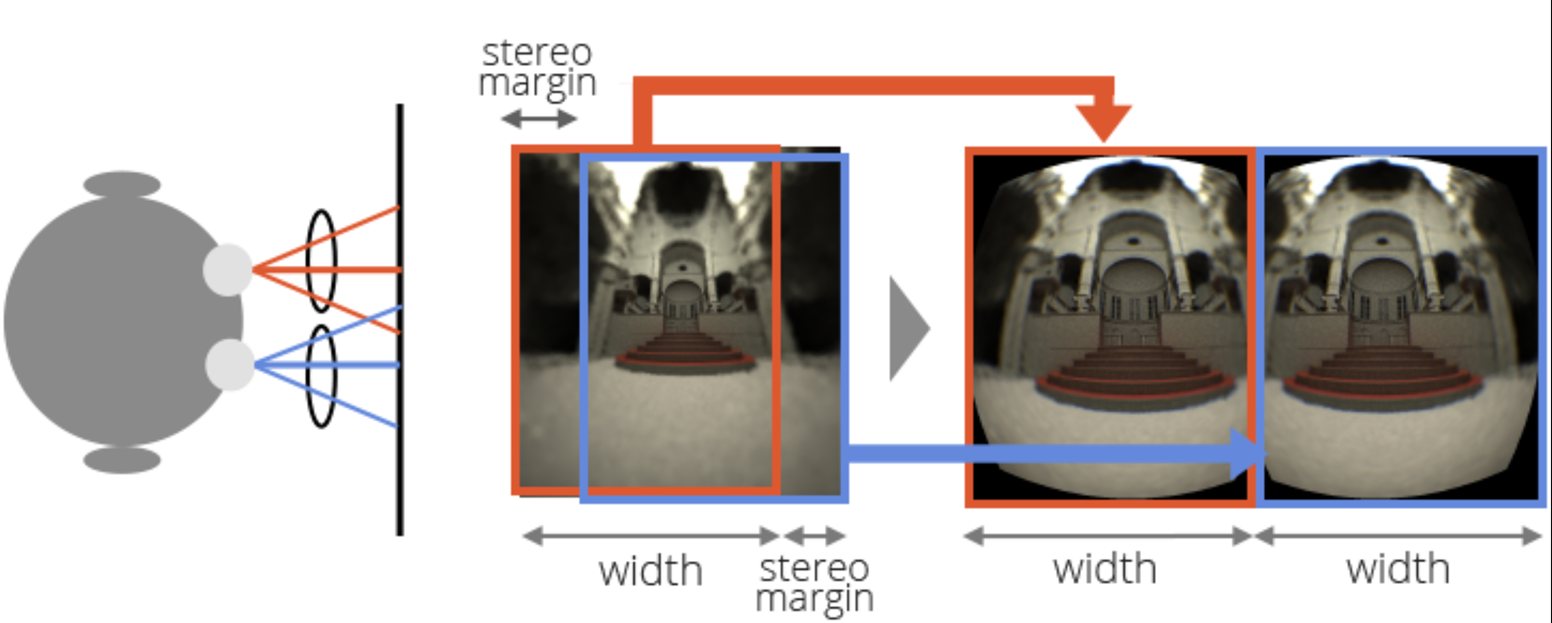

The advantages of a ray-based rendering approach are that it is more flexible than raster-based rendering, easy to set up for XR, and able to cast rays in arbitrary directions [1].

There is a big chance of sample reuse between viewports (Figure 1). Moreover, not all rays contribute equally. Therefore, rays with a low contribution can be omitted to focus on rays with a higher contribution. This leads us to variable ray tracing.

Figure 1. Image courtesy of Takahiro Harada.

References and Resources

[1] Takahiro Harada, Foveated Ray Tracing for VR on Multiple GPUs, SIGGRAPH Asia, 2014

-

Global Illumination Performance in Real-Time Context

(work in progress…)

Most algorithms in the Global Illumination (GI) genre suffer from convergence problems, which limits their use in real-time applications. Screen Space Ambient Occlusion (SSAO) and Screen Space Reflections (SSR) also fall under the GI category and give real-time performance. However, the light simulation is limited in both cases.

Some GI algorithms that stand out for real-time performance are:

- Neural Radiance Caching (NRC): better visual quality than ReSTIR at an insignificant performance cost; built on top of path tracing

- ReSTIR family (DI/GI): built on top of path tracing

- Voxel-based GI, e.g., Voxel Cone Tracing

- Radiance Cascades

- DDGI

References and Resources

- Lambru et al. (2021), Comparative Analysis of Real-Time Global Illumination Techniques in Current Game Engines (https://doi.org/10.1109/ACCESS.2021.3109663)

-

Volume Rendering

(work in progress…)

-

The Ray Marching

(work in progress…)

Ray Marching

Ray marching is related to volume rendering, where rays interact with different scene particles. According to Ray Tracing Gems, “the alternative of ray marching is the volume collision simulation”. Ray marching (similar to traditional ray tracing) is used for complex surface functions. Ray tracing looks for the ray-object intersection in one direction only, while ray marching can move forward and backward along the ray until it finds the intersection. The algorithm uses a Signed Distance Function (SDF) to determine how far it can safely advance along the ray without hitting anything. However, it is confined to analytical scenes.
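The safe-advance idea can be sketched in a few lines. This is a minimal illustration, not a production marcher: the scene is a single analytic sphere, and the names `sphere_sdf` and `sphere_trace`, the step limit, and the epsilon are all assumptions chosen for the example.

```python
import math

def sphere_sdf(p, center=(0.0, 0.0, 3.0), radius=1.0):
    """Signed distance from point p to a sphere (negative inside)."""
    return math.dist(p, center) - radius

def sphere_trace(origin, direction, sdf, max_steps=128, max_dist=100.0, eps=1e-4):
    """March along a normalized ray; return the hit distance t, or None on a miss."""
    t = 0.0
    for _ in range(max_steps):
        p = tuple(o + t * d for o, d in zip(origin, direction))
        dist = sdf(p)        # largest step that cannot cross any surface
        if dist < eps:
            return t         # close enough to the surface: report a hit
        t += dist
        if t > max_dist:
            break            # left the scene bounds
    return None

hit = sphere_trace((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), sphere_sdf)
miss = sphere_trace((0.0, 0.0, 0.0), (0.0, 1.0, 0.0), sphere_sdf)
```

Here `hit` is the distance to the front of the sphere along the z axis, while `miss` is `None` because the ray along the y axis never gets within `eps` of the surface.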

Sphere Tracing

Sphere tracing ([1]) is a variation of ray marching. However, according to Ray Tracing Gems, sphere casting could be a more appropriate term.

Resources

- John C. Hart, Sphere tracing: a geometric method for antialiased ray tracing of implicit surfaces (1996); url: https://graphics.stanford.edu/courses/cs348b-20-spring-content/uploads/hart.pdf

-

Ray-Based Rendering Generic Concepts and Terminologies

(work in progress…)

Shaders

Unlike the rasterization pipeline, ray tracing-based algorithms (generally) have five types of shaders. The computationally expensive pixel shader in rasterization is roughly equivalent to the closest-hit shader. The shaders of the ray tracing pipeline are more or less agnostic to the 3D graphics API. The five shaders are as follows:

- The ray generation shader defines how the ray tracing should start. It runs once per algorithm (per pass)

- The intersection shader/s define the ray-object (aka. geometry) intersection. The shader is reusable

- The miss shader/s define the behavior when the ray misses all geometry in the scene

- The closest-hit shader/s run once per ray and shade the final hit

- The any-hit shader/s run once per hit and determine the transparency

The miss, closest hit, and any hit define the behavior of ray/s and may behave differently between primary, shadow, and indirect rays.
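To make the division of labour concrete, here is a CPU-side toy in Python that mimics the five stages as plain functions. It is only a sketch: real APIs schedule these stages on the GPU, and the scene layout (dictionaries with `center`, `radius`, `color`, `opaque`) and all function names are assumptions made for this example. Transparency is simplified to "the any-hit stage ignores the hit".

```python
import math

def intersection(ray, sphere):
    """Intersection stage: distance to a sphere along a normalized ray, or None."""
    (ox, oy, oz), (dx, dy, dz) = ray
    cx, cy, cz = sphere["center"]
    lx, ly, lz = ox - cx, oy - cy, oz - cz
    b = lx * dx + ly * dy + lz * dz
    c = lx * lx + ly * ly + lz * lz - sphere["radius"] ** 2
    disc = b * b - c
    if disc < 0.0:
        return None
    t = -b - math.sqrt(disc)
    return t if t > 1e-4 else None

def any_hit(sphere):
    """Any-hit stage: runs per candidate hit; False means 'ignore this hit'."""
    return sphere.get("opaque", True)

def closest_hit(sphere, t):
    """Closest-hit stage: runs once per ray, on the nearest accepted hit."""
    return sphere["color"]

def miss(ray):
    """Miss stage: runs when no hit was accepted."""
    return "sky"

def trace(ray, scene):
    best = None
    for sphere in scene:
        t = intersection(ray, sphere)
        if t is not None and any_hit(sphere) and (best is None or t < best[0]):
            best = (t, sphere)
    return closest_hit(best[1], best[0]) if best else miss(ray)

def ray_generation(scene, rays):
    """Ray-generation stage: the entry point that launches every ray."""
    return [trace(ray, scene) for ray in rays]

scene = [
    {"center": (0, 0, 2), "radius": 0.5, "color": "glass", "opaque": False},
    {"center": (0, 0, 5), "radius": 1.0, "color": "red"},
]
rays = [((0, 0, 0), (0, 0, 1)), ((0, 0, 0), (0, 1, 0))]
result = ray_generation(scene, rays)
```

The first ray passes through the non-opaque sphere (rejected by `any_hit`) and is shaded by `closest_hit` on the red sphere; the second ray hits nothing and falls through to `miss`.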


-

Popular Global Illumination Real-Time Engines

Some Popular Real-Time GI Rendering Engines (todo: cross-ref)

- RTX Character Rendering (RTXCR): real-time path tracing

- Zorah: real-time neural rendering

-

Popular Global Illumination Offline and Real-Time Engines

Real-Time Path Tracing Engines and Frameworks

-

- G3D (not updated for a long time): path tracing included

- lighthouse2 (not updated for a long time): path tracing included

Production Renderer (Offline)

- Pixar RenderMan

- V-Ray

- Cycles (Blender), OptiX backend

- Autodesk Arnold, OptiX backend

- The G3D Innovation Engine, OptiX backend

- NVIDIA Iray, OptiX backend

- LuxRender, OptiX backend

- Houdini, OptiX backend

- openmoonray, Embree backend

For Research

- Mitsuba 3 Physically Based Renderer

- Both pbrt-v3 and pbrt-v4 are excellent offline global illumination renderers, frequently used for proofs of concept

-

The Rendering Equation

(work in progress…)

The Rendering Equation

The rendering equation is also referred to as the Light Transport Equation or the Transport Equation (Ray Tracing Gems, sec. 01). Monte Carlo simulation, the usual tool for solving it, appears as the Metropolis Algorithm for Monte Carlo among the top 10 most influential algorithms of the 20th century (Dongarra and Sullivan, 2000). The physical basis for the rendering equation is the law of energy conservation. In words: light exiting the surface = emitted light + (BRDF * reflected incoming light * light attenuation). In other words, the exitant radiance is the sum of emittance + (reflectance * incidence * light attenuation). The equilibrium radiance leaving a point is the sum of emitted plus reflected radiance under a geometric optics approximation.

In general, the rendering equation cannot be solved analytically. However, developing algorithms that approximate it is the basis of the whole Global Illumination genre. One approach to solving the equation is based on finite element methods, leading to the radiosity algorithm. Another approach, using Monte Carlo methods, has led to many different algorithms, including path tracing, photon mapping, Metropolis light transport, and others.

The notation of the rendering equation varies among publications. Here are some of the popular notations:

$$L_0(x,\overrightarrow{\omega}) = L_e(x,\overrightarrow{\omega}) + \int_{\Omega} L_i(x,\overrightarrow{\omega}^{'}) \, f_r(\overrightarrow{\omega}, x, \overrightarrow{\omega}^{'}) \cos\theta \, d\overrightarrow{\omega}^{'}$$

$$L_0(x,\omega_0) = L_e(x,\omega_0) + \int_{\Omega} L_i(x,\omega_i) \, f_r(x, \omega_i \rightarrow \omega_0) \, (\omega_i \cdot n) \, d\omega_i$$

$$L_0(x,\omega_0) = L_e(x,\omega_0) + \int_{\Omega} L_i(x,\omega_i) \, f_r(x, \omega_i, \omega_0) \, (\omega_i \cdot n) \, d\omega_i$$

$$L_0(p,\omega_0) = L_e(p,\omega_0) + \int_{H^2} L_i(p,\omega_i) \, f_r(p, \omega_i \rightarrow \omega_0) \cos\theta \, d\omega_i$$

$$L_0(p,\omega_0) = L_e(p,\omega_0) + \int_{S^2} L_i(p,\omega_i) \, f(p, \omega_i, \omega_0) \, |\cos(\theta_i)| \, d\omega_i$$

$$L_0(\omega_0) = L_e(\omega_0) + \int_{\Omega} L_i(\omega_i) \, f(\omega_i, \omega_0) \, (\omega_i \cdot n) \, d\omega_i$$

$$L_e(x,v) = E(x,v) + \int_{\Omega} L_i(x,\omega) \, f_r(x, \omega \rightarrow v) \, (\cos\theta_x) \, d\omega$$

$$L_o = L_e + \int_{\Omega} L_i \cdot f \cdot \cos\theta_x \cdot d\omega$$

$$L_0(P,\omega_0) = L_e(P,\omega_0) + \int_{S^2} L_i(P,\omega_i) \, f(P, \omega_0, \omega_i) \, |\cos\theta_i| \, d\omega_i$$

The generic explanation is as follows:

- the left-hand side of the equation is the exiting radiance at point $x$ in the outgoing direction $\omega_0$

- the first term on the right-hand side is the emitted radiance at point $x$ in the outgoing direction $\omega_0$

- the integral sums the incoming radiance over all incoming directions $\omega_i$

- the first factor of the integrand is the incoming radiance from direction $\omega_i$. This part can be expanded recursively for each bounce (ray depth)

- the second factor of the integrand is the BRDF

- the third factor of the integrand is the light attenuation (the cosine term)
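As a small worked example of the terms listed above, the sketch below estimates the integral with Monte Carlo sampling for the simplest possible case. Everything specific here is an assumption for illustration: a Lambertian BRDF (albedo / π), constant incoming radiance of 1 from every direction, a surface normal of (0, 0, 1), and uniform hemisphere sampling. Under those assumptions the exact answer is simply the albedo, so the estimate is easy to check.

```python
import math
import random

def sample_uniform_hemisphere(rng):
    """Uniform direction on the hemisphere around n = (0, 0, 1); pdf = 1 / (2*pi)."""
    z = rng.random()                    # cos(theta) is uniform in [0, 1)
    phi = 2.0 * math.pi * rng.random()
    r = math.sqrt(max(0.0, 1.0 - z * z))
    return (r * math.cos(phi), r * math.sin(phi), z)

def outgoing_radiance(emitted, albedo, incoming, n_samples=20000, seed=1):
    """Monte Carlo estimate of L_e + integral(L_i * f_r * cos(theta))."""
    rng = random.Random(seed)
    f_r = albedo / math.pi              # Lambertian BRDF (assumed)
    pdf = 1.0 / (2.0 * math.pi)         # density of the uniform sampler
    total = 0.0
    for _ in range(n_samples):
        w_i = sample_uniform_hemisphere(rng)
        cos_theta = w_i[2]              # (w_i . n) with n = (0, 0, 1)
        total += incoming(w_i) * f_r * cos_theta / pdf
    return emitted + total / n_samples

L_out = outgoing_radiance(emitted=0.0, albedo=0.8, incoming=lambda w: 1.0)
```

`L_out` comes out close to 0.8, and it moves closer as `n_samples` grows. A path tracer replaces the constant `incoming` function with a recursive call that traces the sampled direction into the scene.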

-

Ray-Based Rendering Generic Overview

(work in progress…)

Ray-Based Rendering Approaches Generic Classification

All ray-based rendering pipelines, including ray casting, ray tracing, and advanced global illumination algorithms such as path tracing and photon mapping, can be classified into the following generic categories. This classification is based on the direction of the light path. Light distribution in a scene is in dynamic equilibrium: as much light is absorbed as is emitted.

- Forward ray tracing (aka. light tracing); under the global illumination category, it is sometimes also referred to as forward light transport/ particle

- Backward ray tracing (aka. reverse ray tracing); under the global illumination category, it is sometimes also referred to as backward light transport/ particle

- Hybrid ray tracing

Forward Ray Tracing/ Light tracing

It follows the path of the light particles (photons) forward from the light source to the camera. This makes it technically possible to simulate on a computer the way light actually travels in nature. For instance, the sun emits about $10^{45}$ photons per second. The chance of a random photon from the sun hitting the scene is tiny: only about 1 in every $2.1\times10^9$ photons from the sun even hits the earth, and about 1 in every $4.5\times10^{10}$ of those hits the 100 ft (30 m) radius of the scene.

However, this method is inefficient and impractical, especially with finite computational resources. As the numbers above show, there is a very low probability that a photon from the light source will finally hit the virtual camera sensor.
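The quoted odds can be reproduced with a back-of-the-envelope calculation. The sketch below assumes the sun radiates uniformly in all directions and treats both the earth and the scene as flat discs facing the sun; the distance and radius constants are standard mean values, not figures taken from this post, and the atmosphere, day/night, and incidence angle are ignored.

```python
import math

AU = 1.496e11            # mean Sun-Earth distance in metres (assumed constant)
EARTH_RADIUS = 6.371e6   # mean Earth radius in metres (assumed constant)
SCENE_RADIUS = 30.0      # scene radius from the text (100 ft)

# Fraction of the sun's output intercepted by the earth's cross-section.
earth_fraction = (math.pi * EARTH_RADIUS**2) / (4.0 * math.pi * AU**2)
# Fraction of those photons that land on a scene-sized disc.
scene_fraction = SCENE_RADIUS**2 / EARTH_RADIUS**2

one_in_earth = 1.0 / earth_fraction   # roughly 2.2e9, near the 2.1e9 quoted
one_in_scene = 1.0 / scene_fraction   # roughly 4.5e10
photons_on_scene = 1e45 * earth_fraction * scene_fraction
```

Even with those odds, `photons_on_scene` is still on the order of $10^{25}$ photons per second, far beyond anything a simulation could follow individually, and only a tiny share of those would go on to reach the camera.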

Turner Whitted mentioned that, in an obvious approach to ray tracing, light rays emanating from a source are traced through their paths until they strike the viewer. Since only a few will reach the viewer, this approach is wasteful. However, in Whitted-style ray tracing, surfaces are treated as perfectly shiny and smooth.

Backward Ray Tracing/ Eye Tracing/ Camera Tracing/ Reverse Ray Tracing

Unlike real life, rays traverse the scene in reverse in the virtual world: they start at the viewpoint and go towards the scene.

Appel also suggested that rays be traced in the opposite direction, from the viewer to the objects in the scene. So, we trace a ray from the eye (virtual camera) to a point on the object’s surface and then a ray from that point to the light source. The first ray we shoot from the eye is called the primary ray/ visibility ray/ view ray/ camera ray. If the ray hits an object, we find out how much light it receives by casting another ray (called a light/ shadow ray) from the hit point to the scene’s light/s.

Backward ray tracing is indeed an efficient way of computing direct illumination, but not always an efficient way of simulating indirect lighting. Nonetheless, due to its efficiency, ray tracing and other global illumination algorithms are almost always based on backward tracing. Next Event Estimation can be used with both forward and backward ray tracing for robust light sampling.

Hybrid Ray Tracing

Forward ray tracing generates better results but requires more computational resources. On the other hand, backward ray tracing is faster (compared to forward) but less vivid. Therefore, each of these types has its own limitations. A hybrid approach addresses this problem. However, balancing computational demand and visual quality is still an open question for real-time rendering.

Resources

- https://cs.stanford.edu/people/eroberts/courses/soco/projects/1997-98/ray-tracing/types.html


-

Path Tracing: Ray Bounce Termination

(work in progress…)

Splitting

-

Ray Tracing Algorithm

(Work in progress…)

Ray Tracing Algorithm

TODO: explain mathematically

Ray Tracing Algorithms Classification

A proper classification could be difficult, as it can be seen from many points of view.

Calculation of rays

Calculating the total number of rays per pixel, per frame, or per second (for videos) is straightforward. For example, if we shoot

- $m$ rays per sample

- $n$ samples per pixel

- $o$ pixels per frame

- $p$ frames per second

the total number of rays per second will be $m\times n\times o\times p$. In a real-time scenario, even with a very low ray count, we still require billions of rays per second.
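Plugging in some numbers shows how quickly the budget grows. The values below are illustrative assumptions only (4 rays per sample, 1 sample per pixel, 60 frames per second), and `rays_per_second` is a helper name invented for this example.

```python
def rays_per_second(rays_per_sample, samples_per_pixel, pixels_per_frame, frames_per_second):
    """Total ray budget: m * n * o * p."""
    return rays_per_sample * samples_per_pixel * pixels_per_frame * frames_per_second

full_hd = rays_per_second(4, 1, 1920 * 1080, 60)   # 497_664_000
uhd_4k = rays_per_second(4, 1, 3840 * 2160, 60)    # 1_990_656_000
```

So a single sample per pixel at 4K and 60 fps already needs about two billion rays per second, and every extra sample per pixel multiplies that figure again.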

Biasness

Ray Tracing vs. Path Tracing

Resources and References

-

Path Tracing Algorithm

Reference and Resources

-

Ray Casting Algorithm

(work-in-progress)

Ray casting was a popular rendering technique in the 1990s. At that time, ray casting added more graphics fidelity over the conventional rasterization-based rendering pipeline. It is a ray-based rendering technique; however, ray casting is non-recursive. After the first hit, it is possible to check whether the hit point is directly illuminated by a light source by generating a shadow ray. Therefore, ray casting is not considered part of the Global Illumination genre. Nonetheless, understanding this algorithm could ease the learning curve of other ray-based algorithms, e.g., recursive ray tracing and global illumination algorithms (path tracing, photon mapping, ReSTIR, etc.). Ray casting is also referred to as ray shooting in the literature.

Mathematical Details

Ray casting is a simple collision process that finds the closest, or sometimes just any, object (any primitive, e.g., a triangle) intersection along the ray/s. Generally, the algorithm shoots one or more rays. If a ray passing through a pixel and out into the 3D scene hits a primitive, the distance along the ray from the origin to the primitive is determined, and the color data from the primitive contributes to the final color of the pixel. The final color, however, also depends on the normal vector at the intersection point and on the material properties. As part of shading the hit point, a new ray could be cast toward a light source to determine whether the object is shadowed$^{[2]}$.

TODO:

Difference Btw. Ray Casting and Ray Tracing

- Ray casting is limited to the primary rays, while ray tracing shoots secondary rays from the intersection point.

- The ray may also bounce and hit other objects and pick up color and lighting information from them

- Ray tracing uses the ray casting mechanism to recursively gather light contributions from reflective and refractive objects.
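The non-recursive nature of ray casting is easy to see in code. The following is a minimal sketch, assuming a scene made only of spheres given as `(center, radius)` pairs, a single point light, and plain Lambert shading from the surface normal; all function names are invented for the example. A recursive ray tracer would call `cast` again from the hit point for reflection and refraction, which this function deliberately never does.

```python
import math

def sub(a, b): return tuple(x - y for x, y in zip(a, b))
def dot(a, b): return sum(x * y for x, y in zip(a, b))
def normalize(v):
    n = math.sqrt(dot(v, v))
    return tuple(x / n for x in v)

def hit_sphere(origin, direction, center, radius):
    """Nearest positive hit distance along a normalized ray, or None."""
    oc = sub(origin, center)
    b = dot(oc, direction)
    disc = b * b - (dot(oc, oc) - radius * radius)
    if disc < 0.0:
        return None
    t = -b - math.sqrt(disc)
    return t if t > 1e-4 else None

def closest_hit(origin, direction, spheres):
    best = None
    for center, radius in spheres:
        t = hit_sphere(origin, direction, center, radius)
        if t is not None and (best is None or t < best[0]):
            best = (t, center)
    return best

def cast(origin, direction, spheres, light):
    """Ray casting: one primary ray plus one shadow ray, no recursion."""
    hit = closest_hit(origin, direction, spheres)
    if hit is None:
        return 0.0                                    # background
    t, center = hit
    point = tuple(o + t * d for o, d in zip(origin, direction))
    normal = normalize(sub(point, center))
    to_light = sub(light, point)
    light_dist = math.sqrt(dot(to_light, to_light))
    light_dir = normalize(to_light)
    blocker = closest_hit(point, light_dir, spheres)  # shadow ray
    if blocker is not None and blocker[0] < light_dist:
        return 0.0                                    # in shadow
    return max(0.0, dot(normal, light_dir))           # Lambert term

target = ((0.0, 0.0, 5.0), 1.0)
occluder = ((0.0, 2.0, 2.0), 0.5)
light = (0.0, 4.0, 0.0)
eye, forward = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)

lit = cast(eye, forward, [target], light)
shadowed = cast(eye, forward, [target, occluder], light)
```

With only the target sphere, the hit point receives light at a 45-degree angle; adding the occluder between the hit point and the light makes the shadow ray report a blocker, so the same pixel turns black.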

Resources

- F. Permadi’s Blog

- Ray Tracing Gems, Haines and Akenine-Möller


-

Post Processing in Physically-Based Rendering

(work in progress…)

Tone Mapping

In Physically Based Rendering (PBR), tone mapping compresses the vast range of light intensities (High Dynamic Range (HDR)) calculated by the renderer into a display-friendly range, often the Low Dynamic Range (LDR). This keeps details visible in both bright highlights and dark shadows, prevents washed-out or crushed images, and preserves the scene’s intended mood and visual fidelity for human perception.

Some of the commonly used tone mappers are:

- Reinhard Global Operator

- Photographic Tone Reproduction (Modified Reinhard / Exposure-Based Operators)

- Filmic Tone Mapping (Hable / Uncharted 2 Curve)

- ACES (Academy Color Encoding System)

- Drago and Other Perceptual Methods

- Sigmoidal and Custom Parametric Curves
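Two of the operators listed above are short enough to sketch directly. The code below works on a single scalar luminance value for clarity; the function names are assumptions, the filmic constants are the ones John Hable published for the Uncharted 2 curve, and the exposure bias of 2.0 and white point of 11.2 follow his reference snippet rather than anything tuned for a particular scene.

```python
def reinhard(x):
    """Reinhard global operator: maps [0, inf) into [0, 1)."""
    return x / (1.0 + x)

def reinhard_extended(x, white):
    """Reinhard with a white point: an input equal to `white` maps to 1."""
    return x * (1.0 + x / (white * white)) / (1.0 + x)

def _hable_partial(x):
    # Curve constants published by John Hable for the Uncharted 2 operator.
    a, b, c, d, e, f = 0.15, 0.50, 0.10, 0.20, 0.02, 0.30
    return (x * (a * x + c * b) + d * e) / (x * (a * x + b) + d * f) - e / f

def hable_filmic(x, exposure_bias=2.0, white=11.2):
    """Filmic curve, normalized by the curve's value at the white point."""
    return _hable_partial(x * exposure_bias) / _hable_partial(white)

def to_display(x, gamma=2.2):
    """Clamp to [0, 1] and apply a simple gamma encode."""
    return min(max(x, 0.0), 1.0) ** (1.0 / gamma)

hdr_values = [0.0, 0.18, 1.0, 4.0, 16.0]
ldr_reinhard = [to_display(reinhard(v)) for v in hdr_values]
ldr_filmic = [to_display(hable_filmic(v)) for v in hdr_values]
```

Both curves are monotonic, so brighter HDR values never end up darker on screen; the difference lies in how they distribute contrast between shadows, midtones, and highlights.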

-

Variance, Noise, and Visual Artifacts in Real-Time Physically-Based Rendering

(work in progress…)

Noise is often referred to as error, visual artifacts, or variance (especially in physically-based rendering (PBR)). The limited number of samples is the primary constraint of real-time PBR. Higher variance means noisier output, as the estimate has not yet converged to the correct result of the integral (the Light Transport Equation) with the given number of samples. Consequently, sophisticated denoising algorithms play a significant role in real-time PBR. The denoising process is sometimes referred to as reconstruction in the literature. Denoisers can also be referred to as reconstruction filters (a convolution process).

Firefly

- A lighting artifact.

- A pixel that is unusually bright compared to the neighboring pixels, appearing much like a firefly in the dark.

- It typically happens when a ray bounces off a surface and randomly hits a very bright, small light source.

- The small size of the light means it isn’t hit very often, but when it is, it contributes a large amount of energy to a pixel.
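The mechanism can be reproduced with a toy experiment. In the sketch below, every number is an assumption made for illustration: each sample returns a dim value of 0.2 and, with probability 0.001, additionally hits a tiny light of radiance 5000. Clamping each sample is one common mitigation; it is shown here only as an example, and it trades the fireflies for bias (lost energy).

```python
import random

def sample_radiance(rng, hit_probability=0.001, light_radiance=5000.0, base=0.2):
    """One path sample: a dim value, rarely plus a hit on a tiny bright light."""
    return base + (light_radiance if rng.random() < hit_probability else 0.0)

def pixel_estimate(rng, n_samples, clamp=None):
    total = 0.0
    for _ in range(n_samples):
        value = sample_radiance(rng)
        if clamp is not None:
            value = min(value, clamp)   # firefly clamp: bounded, but biased
        total += value
    return total / n_samples

# 2000 pixels at 16 samples each, same random sequence for both runs.
rng = random.Random(7)
raw = [pixel_estimate(rng, 16) for _ in range(2000)]
rng = random.Random(7)
clamped = [pixel_estimate(rng, 16, clamp=10.0) for _ in range(2000)]
```

Most entries of `raw` sit at 0.2, while the few pixels that happened to hit the light jump to several hundred. In `clamped` no pixel can exceed 10, but the average brightness is also far below the true mean, which is the cost of clamping.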

Temporal stability

- Reproject the past denoised frame

- To ensure temporal coherence, warp the previous frame’s denoised output using screen-space motion vectors (motion vector estimation)

- Optical flow algorithms

- time warping

- Interactive Stable Ray Tracing
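The reprojection step in the list above can be sketched as follows. This is a deliberately simplified model: frames are small 2D lists of scalars, motion vectors are whole-pixel offsets, and the blend factor `alpha` is an assumed value. Real implementations typically add bilinear history fetches and reject history using depth or normal tests, none of which is shown here.

```python
def reproject(history, motion, x, y):
    """Fetch last frame's value for pixel (x, y) using its motion vector.

    motion[y][x] = (dx, dy) says how far the surface moved since the last
    frame, in whole pixels. Returns None if the source is off screen.
    """
    dx, dy = motion[y][x]
    px, py = x - dx, y - dy
    if 0 <= py < len(history) and 0 <= px < len(history[0]):
        return history[py][px]
    return None

def temporal_accumulate(current, history, motion, alpha=0.2):
    """Blend the noisy current frame with reprojected history."""
    out = []
    for y, row in enumerate(current):
        out_row = []
        for x, value in enumerate(row):
            previous = reproject(history, motion, x, y)
            if previous is None:
                out_row.append(value)        # disocclusion: no usable history
            else:
                out_row.append(alpha * value + (1.0 - alpha) * previous)
        out.append(out_row)
    return out

# A bright feature moves one pixel to the right between frames.
history = [[1.0, 0.0, 0.0]]
current = [[0.0, 1.0, 0.0]]
motion = [[(1, 0), (1, 0), (1, 0)]]
stable = temporal_accumulate(current, history, motion)
```

Because the history is warped before blending, the bright feature stays sharp at its new position instead of leaving a ghost trail at the old one.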

Resources and References

- Alain Galvan’s Ray Tracing Denoising blog

- Jacco Bikker’s Reprojection in a Ray Tracer blog

- The NVIDIA OptiX AI-Accelerated Denoiser is a robust, low-budget denoiser; however, it is limited to offline PBR. A straightforward implementation can be found here. More is available in the NVIDIA-RTX Git repository.

2023

-

Path Tracing Algorithm (Sampling)

(work in progress…)

Sampling is everywhere in physically-based rendering (aka. global illumination algorithms), e.g., path tracing. It is the first quality-control checkpoint for a path tracer. According to Haines and Akenine-Möller in the Ray Tracing Gems series (2019), “A well-designed sampling is complex, and it remains an active area of research”.

So, what are the different sampling areas for a path tracer? Honestly, a straight answer is difficult. Different researchers have answered it differently in their lecture notes. For example, Morgan McGuire divided sampling into:

- Direct illumination/ Light source sampling (TU Wien)

- Indirect illumination

- Pixel area

Prof. Matthias Teschner classified into:

- Sampling of the solid angle/ hemisphere

- Pixel space sampling

- Sampling of the lens (defocus blur/ DoF/ depth sampling)

- Sampling of time

Prof. Jacco Bikker (Utrecht University, NL):

- Sampling area lights (soft shadows?)

- Sampling the hemisphere (diffuse reflections)

- Sampling the pixel (AA)

- Sampling the lens (DoF)

- Sampling time (motion blur)

Prof. Jan Kautz, UCL:

- Light sources (soft shadows, I guess; hard shadows, meaning direct lighting)

- Hemisphere (indirect lighting)

- BRDF (glossy, specular reflection, should be subclass of hemisphere sampling)

- pixel: antialiasing

- Lens (DoF)

- Time (Motion blur)

Prof. Pat Hanrahan (Stanford), sampling:

- the reflection function produces blurred reflection

- the transmission function produces blurred transmission

- the solid angle of light sources produces penumbras and soft shadows

- the path accounts for interreflection

- in wavelength simulates spectral effects, e.g., dispersion

- over a pixel prefilters the image and reduces aliasing

- the camera aperture produces DoF

- in time produces motion blur
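Several of the sampling domains that recur across these classifications (pixel, lens, time, hemisphere) can be sketched as small sampling routines. The helpers below are illustrative assumptions, not code from any of the cited lecture notes: they use plain uniform random numbers, a surface normal of (0, 0, 1), and a thin-lens style disk for the aperture.

```python
import math
import random

def sample_pixel(rng, px, py):
    """Jittered position inside pixel (px, py): antialiasing."""
    return (px + rng.random(), py + rng.random())

def sample_disk(rng, radius):
    """Uniform point on a disk: lens aperture (DoF) or a disk area light."""
    r = radius * math.sqrt(rng.random())
    phi = 2.0 * math.pi * rng.random()
    return (r * math.cos(phi), r * math.sin(phi))

def sample_time(rng, shutter_open, shutter_close):
    """Uniform time within the shutter interval: motion blur."""
    return shutter_open + (shutter_close - shutter_open) * rng.random()

def sample_cosine_hemisphere(rng):
    """Cosine-weighted direction around n = (0, 0, 1): diffuse bounce.

    Projects a uniform disk sample up onto the hemisphere, which gives
    pdf(w) = cos(theta) / pi.
    """
    x, y = sample_disk(rng, 1.0)
    z = math.sqrt(max(0.0, 1.0 - x * x - y * y))
    return (x, y, z)

rng = random.Random(0)
path_sample = {
    "pixel": sample_pixel(rng, 10, 20),
    "lens": sample_disk(rng, 0.05),
    "time": sample_time(rng, 0.0, 1.0 / 60.0),
    "bounce": sample_cosine_hemisphere(rng),
}
```

One camera path draws from all of these at once, which is why a path tracer's sample is often described as a point in a high-dimensional space; production renderers usually replace the plain random numbers with stratified or low-discrepancy sequences.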

References and Resources

-

Ray-Based Rendering Learning Resources

(work in progress…)

I titled this post ray-based learning resources because it does not focus exclusively on Whitted-style ray tracing or the Cook-style recursive ray tracing algorithm. These resources are algorithm-agnostic and apply to the common ray-based rendering categories. Ray casting is the earliest member of this category and has very limited applications nowadays. In contrast, global illumination (aka. Physically-Based Rendering, indirect illumination rendering) is the advanced branch of ray-based rendering, which generates physically accurate light simulation in virtual environments. Path tracing is one of the most popular algorithms in this category. It is mainly a discrete light simulation that approximately imitates continuous real-world light interaction using approximate physics and calculus. There are hundreds of algorithms in the global illumination category. Other than path tracing, photon mapping, Metropolis light transport, Radiosity, Ambient Occlusion, Dynamic Diffuse Global Illumination (DDGI), etc. are also very popular. However, each algorithm has its advantages and limitations. Therefore, professional-grade rendering engines often use hybrid approaches that combine multiple global illumination algorithms, each for the photorealistic effects it handles best.

The primary goal of this blog is to gather available resources that may assist newcomers. Experts might find this redundant. Before going further, I strongly recommend exploring the Real-Time Rendering Resources webpage, which has been built over a long time and literally contains the best resources available on the internet.

Other than that, here is a list of books that I found worth adding to the learning process. The sequence is based on my personal choice. Some of the resources are free:

- Ray Tracing Gems Series

- Scratchapixel, Blog style

- Physically Based Rendering Book Online 👍🏼 👍🏼 Must Read

- The Graphics Codex 👍🏽 👍🏼. The G3D rendering engine is really cool for real-time and cloud rendering with path tracing. However, as far as I know, the project has not been updated for a long time (2025)

- Ray Tracing in One Weekend Series

- GPU Gems Series

Besides, if you are new to the rendering domain, you can follow:

- the book Fundamentals of Computer Graphics (the latest version)

- Learn OpenGL, Must Read blog-style

Other than that, the GDC Vault and Advances in Real-Time Rendering in Games are two excellent resources for keeping up with the latest knowledge and updates in real-time ray-based rendering approaches (especially in global illumination).

Computer Graphics YouTube Lecture Series:

- Cem Yuksel’s Lecture Series

- Justin Solomon’s Lecture

- Computer Graphics at TU Wien

- Ravi Ramamoorthi’s Lecture

- Keenan Crane’s Lecture

- Wolfgang Huerst’s Lecture Series

- Rajesh Sharma SIGGRAPH 2021

Blogs on Ray and Path Tracing

- Jacco Bikker’s articles are really wonderful, especially the Lighthouse2 real-time path tracing engine. However, if I am not wrong (as of 2025), the project has not been continued

- unbiased unidirectional path tracing in 99 lines of C++ 👍🏽 👍🏽

- The blog at the bottom of the sea 👍🏼

- agraphicsguynotes

- memoRandom, in Japanese

Ray Tracing in One Weekend (RTOW) series

- Peter Shirley’s Ray Tracing in One Weekend Series

- CUDA (porting the C++ code to CUDA already makes it 10X faster)

- OptiX

- Unity

- RTOW in Rust

Developer’s Forums